The fact that artificial intelligence can play games is nothing new. Chess algorithms in particular have become famous, but AI has also been successful in StarCraft 2. Now OpenAI has, for the first time, enabled an AI to craft diamond pickaxes in Minecraft, as Singularity Hub reports.

First AI-Generated Diamond Pickaxe

The San Francisco AI company is not the first to venture into the world of blocks with AI, but past efforts by other developers have not gotten very far. The difficulty lies in the non-linear gameplay in a procedurally generated game world without clear goals, which is very different from a chessboard or a StarCraft map. In a blog post, the nine-person development team now explains how it built a neural network based on nearly 70,000 hours of Minecraft videos from YouTube. Several short clips show the AI in action, for example climbing around, looking for food, smelting ores, or crafting items at the crafting table.

What sets it apart: all of this happens in survival mode, whereas previous Minecraft AIs often ran in creative mode or worked in specially optimized, simplified input environments. One example is the MineRL AI competition, in which none of the 660 teams participating in 2019 managed to mine a diamond.

To the best of our knowledge, there is no published work that operates in the full, unmodified human action space, including drag-and-drop inventory management and item crafting.

OpenAI

OpenAI, by contrast, emulates classic mouse and keyboard input just like a human player. Gamers, however, will not like the frame rate used: the algorithm runs at only 20 fps in order to get by with less computing power.
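At 20 fps, every frame is one time step at which the agent emits a combined keyboard-and-mouse action. The following is a minimal sketch, not OpenAI's actual action format: the `Action` fields and the `steps_for_hours` helper are hypothetical names chosen for illustration. It works out how many such steps the video hours quoted in this article correspond to at that rate.

```python
from dataclasses import dataclass, field

FPS = 20  # frame rate the agent runs at, per the article


@dataclass(frozen=True)
class Action:
    """One hypothetical keyboard-and-mouse action, emitted once per frame."""
    keys: frozenset = field(default_factory=frozenset)  # keys held, e.g. {"w", "space"}
    mouse_dx: float = 0.0  # horizontal camera movement
    mouse_dy: float = 0.0  # vertical camera movement
    attack: bool = False   # left mouse button held


def steps_for_hours(hours: int, fps: int = FPS) -> int:
    """Number of frames (= action steps) in `hours` of gameplay at `fps`."""
    return hours * 3600 * fps


jump_forward = Action(keys=frozenset({"w", "space"}), mouse_dx=2.5)
print(steps_for_hours(2_000))   # 144000000 (labeled contractor footage)
print(steps_for_hours(70_000))  # 5040000000 (IDM-labeled footage)
```

Even at the reduced frame rate, the 70,000 hours amount to roughly five billion individual action steps.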

Video pre-training keeps Nvidia Volta and Ampere busy for days

The researchers describe the development of their artificial intelligence in detail in the project's paper, which is freely accessible, as is the code on GitHub. They call their method video pretraining (VPT). First, nearly 2,000 hours of Minecraft gameplay were recorded together with the player's inputs, documenting what the player does at any given moment: which keys they press, how they move the mouse, and what they are actually doing in the game. This data was used to train an inverse dynamics model (IDM), which then supplied matching labels for a further 70,000 hours of Minecraft video.

In other words, the first step was an algorithm that tries to identify which player input led to what is shown in the game sequences fed to it. To do this, individual actions were isolated and the gameplay before and after each of them was taken into account. According to the researchers, classifying what is happening is much easier for an AI when it can also see what comes after an action rather than only what came before: the model does not first have to guess what the player in the video is planning to do.
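Why seeing both sides of an action helps can be illustrated with a deliberately tiny toy, which is our own assumption and not OpenAI's model: the "video" is just a sequence of x-positions, and each action moves the player by -1, 0 or +1. A labeler that may look at the following frame recovers every action exactly, while a past-only guesser has to predict the player's intent.

```python
from typing import List


def rollout(start: int, actions: List[int]) -> List[int]:
    """Toy 'video': the player's x-position after each action (-1, 0 or +1)."""
    frames = [start]
    for a in actions:
        frames.append(frames[-1] + a)
    return frames


def inverse_dynamics_label(frames: List[int], t: int) -> int:
    """Non-causal labeling: compare frame t with the future frame t + 1."""
    return frames[t + 1] - frames[t]


def causal_guess(frames: List[int], t: int) -> int:
    """Past-only baseline: assume the player repeats the last observed move."""
    if t == 0:
        return 0
    return frames[t] - frames[t - 1]


def accuracy(pred: List[int], truth: List[int]) -> float:
    return sum(p == y for p, y in zip(pred, truth)) / len(truth)


true_actions = [1, 1, 0, -1, -1, 1, 0, 0, 1, -1]
video = rollout(start=0, actions=true_actions)
idm = [inverse_dynamics_label(video, t) for t in range(len(true_actions))]
past_only = [causal_guess(video, t) for t in range(len(true_actions))]
print(accuracy(idm, true_actions))        # 1.0
print(accuracy(past_only, true_actions))  # 0.3
```

In real footage the relationship between frames and inputs is far noisier, which is why OpenAI trains a neural network for this step, but the advantage of non-causal context is the same.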

To utilize the wealth of unlabeled video data available on the Internet, we introduce a novel, yet simple, semi-supervised imitation learning method: video pretraining (VPT). We start by collecting a small dataset from contractors where we record not only their video, but also the actions they took, which in our case are keypresses and mouse movements. With this data we train an inverse dynamics model (IDM), which predicts the action being taken at each step in the video. […]

We chose to validate our method in Minecraft because it (1) is one of the most actively played video games in the world and thus has a wealth of freely available video data and (2) is open-ended with a wide variety of things to do, similar to real-world applications such as computer usage. […]

OpenAI
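The three stages (train an IDM on the small labeled set, use it to label the large unlabeled set, then train a policy on those labels by behavioral cloning) can be sketched on toy data. Everything here is an illustrative assumption: integer "frames", three named actions, and majority-vote lookup tables standing in for the neural networks OpenAI actually trains.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

Video = List[int]  # toy "video": one integer observation per frame


def train_idm(labeled: List[Tuple[Video, List[str]]]) -> Dict[int, str]:
    """Stage 1: learn which action explains each frame-to-frame change,
    by majority vote over the small labeled (contractor) set."""
    votes = defaultdict(Counter)
    for frames, actions in labeled:
        for t, action in enumerate(actions):
            votes[frames[t + 1] - frames[t]][action] += 1
    return {delta: c.most_common(1)[0][0] for delta, c in votes.items()}


def pseudo_label(idm: Dict[int, str], video: Video) -> List[str]:
    """Stage 2: label unlabeled footage with the IDM (uses frames t and t + 1)."""
    return [idm.get(video[t + 1] - video[t], "noop") for t in range(len(video) - 1)]


def behavioral_cloning(videos: List[Video], labels: List[List[str]]) -> Dict[int, str]:
    """Stage 3: fit a causal policy (state -> action) on the pseudo-labels.
    Here the 'state' is simply the current frame value."""
    counts = defaultdict(Counter)
    for frames, actions in zip(videos, labels):
        for t, action in enumerate(actions):
            counts[frames[t]][action] += 1
    return {state: c.most_common(1)[0][0] for state, c in counts.items()}


labeled = [([0, 1, 2, 2, 1], ["right", "right", "noop", "left"])]
idm = train_idm(labeled)  # {1: 'right', 0: 'noop', -1: 'left'}
unlabeled = [[0, 1, 2, 3], [3, 2, 2, 1]]
labels = [pseudo_label(idm, v) for v in unlabeled]
policy = behavioral_cloning(unlabeled, labels)
print(labels)  # [['right', 'right', 'right'], ['left', 'noop', 'left']]
```

The point of the design is that only stage 1 needs the expensive recorded inputs; stages 2 and 3 run on video alone.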

A total of 32 Nvidia A100 accelerators were used to train the model that works out which inputs correspond to which actions; even these Ampere GPUs needed about four days to view and process all the content. Training on the 70,000 hours of automatically labeled Minecraft video took even longer, about nine days, even though no fewer than 720 Volta-based Tesla V100 GPUs were used.
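A rough way to compare the two training runs is to multiply the number of GPUs by the wall-clock days quoted above. This simple sketch uses only the article's approximate figures; note that A100 and V100 GPUs differ in performance, so GPU-days across the two generations are not a like-for-like measure of compute.

```python
def gpu_days(gpus: int, days: float) -> float:
    """Accelerator-days: number of GPUs times wall-clock days."""
    return gpus * days


idm_a100 = gpu_days(32, 4)          # inverse dynamics model on A100s
foundation_v100 = gpu_days(720, 9)  # training on the 70,000 labeled hours
print(idm_a100, foundation_v100)    # 128 6480
print(foundation_v100 / idm_a100)   # 50.625
```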

Behavioral cloning brings the first stone pickaxe

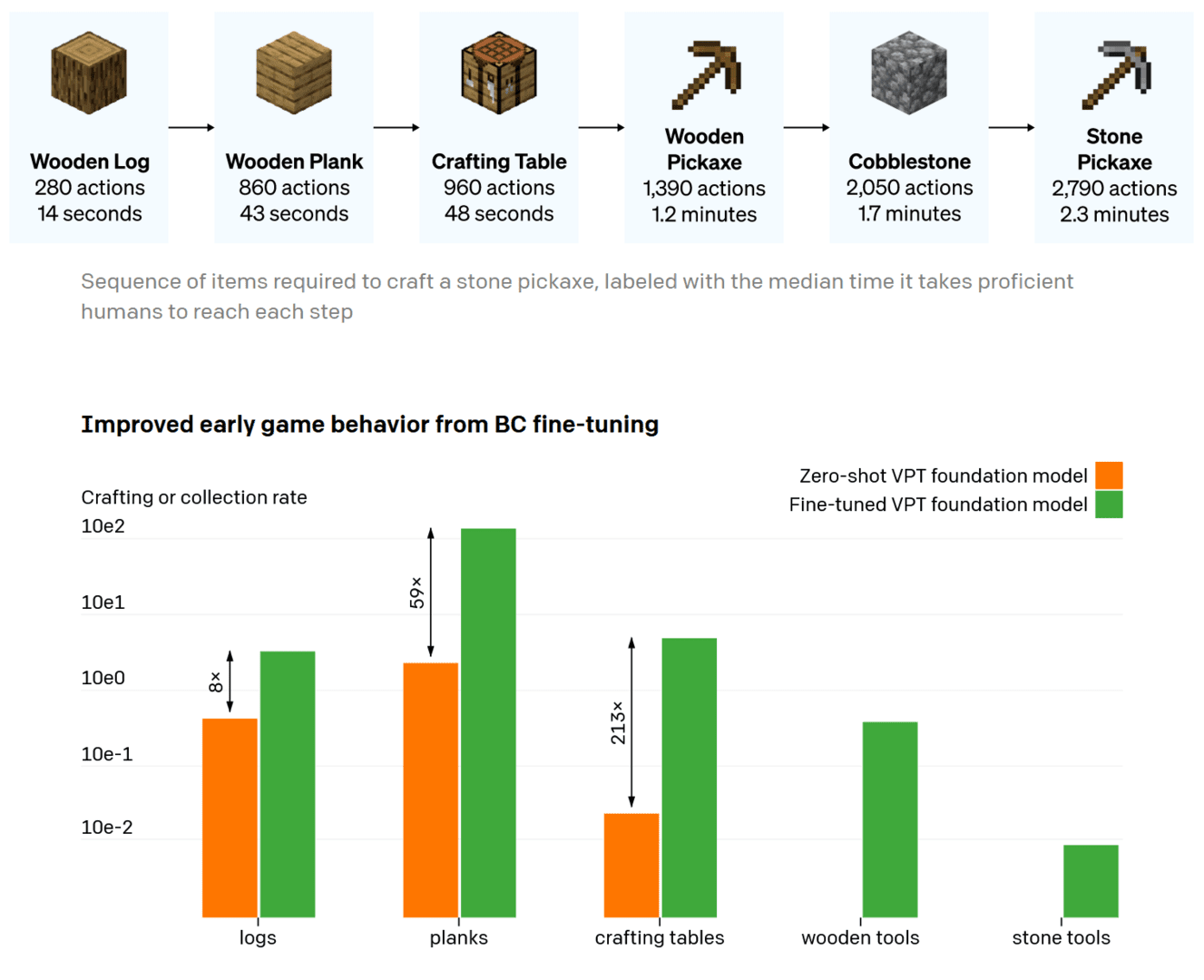

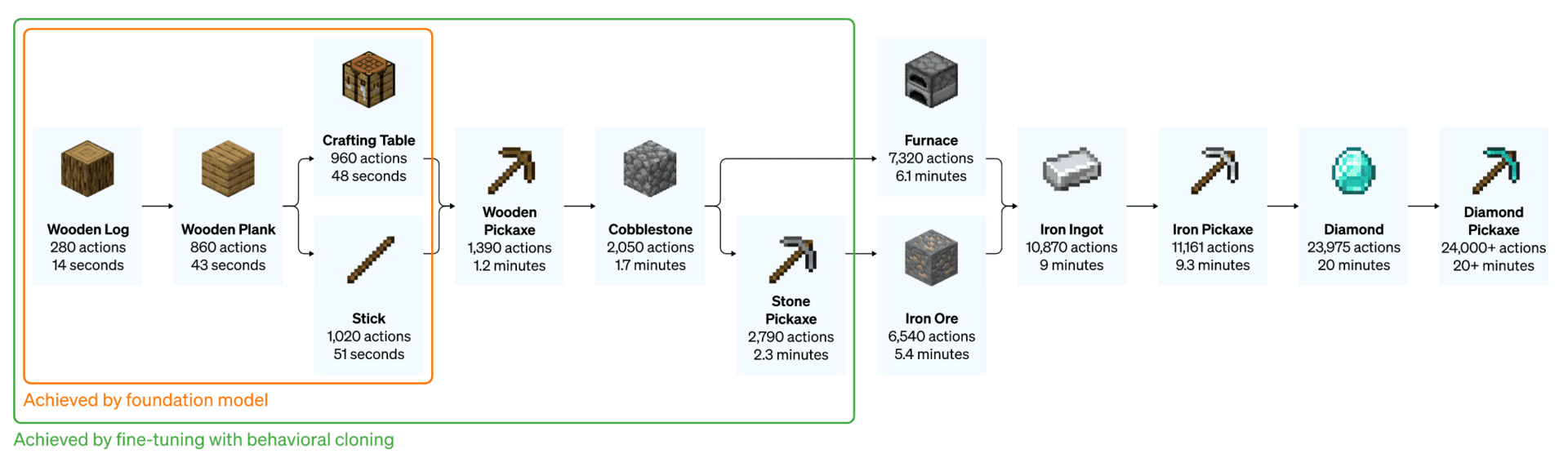

The result: the neural network manages to move around in Minecraft, chop down trees, process the wood, and even build a crafting table. That was not enough for the OpenAI developers, however, so the AI was optimized further using behavioral cloning. To train it for specific behavior, the algorithm was fine-tuned on more specific gameplay, for example building a small house or the typical course of the first ten minutes of a game. A100 graphics cards were used again, but this time only 16 of them, and they needed about two days for the computation.
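Behavioral cloning is ordinary supervised learning: the policy is pushed toward the action a human took in each situation. The following minimal sketch of fine-tuning in that sense assumes a hypothetical three-action set and a lookup table of logits in place of OpenAI's neural network. It starts from "pretrained" preferences and runs gradient descent on the cross-entropy loss over task-specific demonstrations.

```python
import math
from typing import Dict, List, Tuple

ACTIONS = ["forward", "attack", "jump"]  # hypothetical, tiny action set


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def bc_finetune(policy: Dict[str, List[float]],
                demos: List[Tuple[str, str]],
                lr: float = 0.5, epochs: int = 20) -> Dict[str, List[float]]:
    """Behavioral cloning by gradient descent on the cross-entropy between the
    policy's action distribution and the demonstrated action. Starts from the
    pretrained logits (fine-tuning) instead of from scratch."""
    logits = {s: list(v) for s, v in policy.items()}  # copy; keep the original
    for _ in range(epochs):
        for state, action in demos:
            probs = softmax(logits[state])
            target = ACTIONS.index(action)
            for i in range(len(ACTIONS)):
                # d(cross-entropy)/d(logit_i) = p_i - 1[i == target]
                grad = probs[i] - (1.0 if i == target else 0.0)
                logits[state][i] -= lr * grad
    return logits


pretrained = {"facing_tree": [1.0, 0.5, 0.2]}  # hypothetical prior preferences
demos = [("facing_tree", "attack")] * 5        # task data: chop the tree
tuned = bc_finetune(pretrained, demos)
print(softmax(pretrained["facing_tree"]))  # "forward" is most likely
print(softmax(tuned["facing_tree"]))       # "attack" now dominates
```

Starting from the pretrained values rather than zeros is what makes this fine-tuning: the general behavior is kept for states the new data does not cover.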

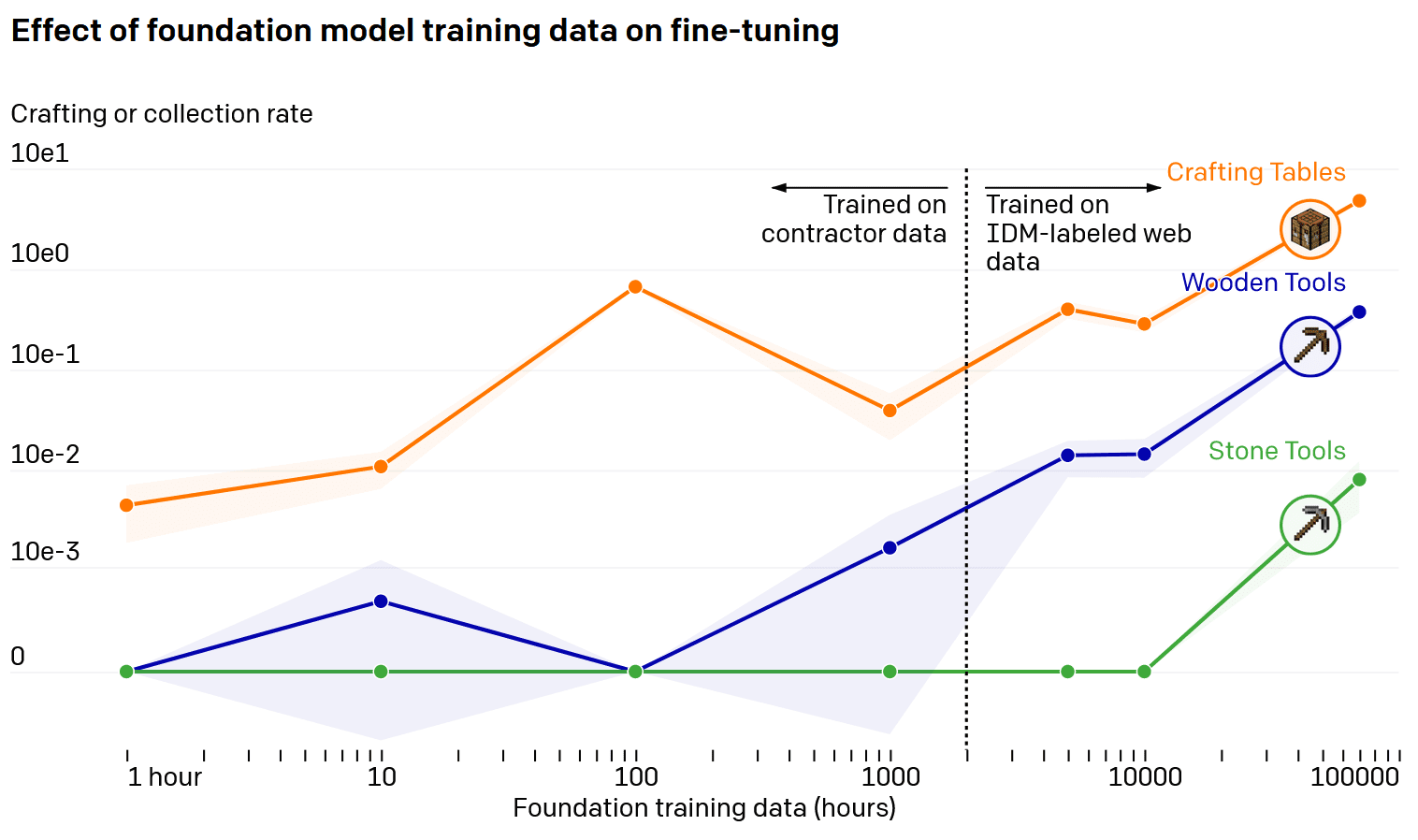

And indeed, the researchers saw significant improvements in their AI. For example, it needed only an eighth of the time to chop down the first tree. Its efficiency at making wooden planks increased by a factor of 59, and for crafting tables the factor was as high as 215. Furthermore, the neural network now made wooden tools, mined stone, and then crafted a stone pickaxe. This required feeding the algorithm about 10,000 hours of video; for wooden tools, by contrast, 100 hours of training were sufficient.

Reach Your Goal With Reinforcement Learning

As the next and final step, the OpenAI team opted for further fine-tuning with reinforcement learning. Training was now even more specific, and the AI was given a clearly defined goal: it was to craft a diamond pickaxe. This involved finding, mining, and smelting iron ore, crafting an iron pickaxe, and finally finding diamonds. Thanks to the targeted training, the neural network managed all this much faster than the average Minecraft player: after about 20 minutes, the diamond pickaxe was ready.
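The chain of sub-goals leading to the diamond pickaxe is a strict dependency order. The following simplified sketch is our own model of the standard survival-mode recipes, omitting sticks, fuel, and quantities, and is not taken from OpenAI's code. It writes the milestones as a dependency graph and orders them with Python's standard `graphlib` module (Python 3.9 or later).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Simplified prerequisites (assumption: standard survival-mode recipes;
# sticks, fuel and item quantities are left out).
TECH_TREE = {
    "planks": {"logs"},
    "crafting_table": {"planks"},
    "wooden_pickaxe": {"planks", "crafting_table"},
    "cobblestone": {"wooden_pickaxe"},
    "stone_pickaxe": {"cobblestone", "crafting_table"},
    "furnace": {"cobblestone", "crafting_table"},
    "iron_ore": {"stone_pickaxe"},
    "iron_ingot": {"iron_ore", "furnace"},
    "iron_pickaxe": {"iron_ingot", "crafting_table"},
    "diamond": {"iron_pickaxe"},
    "diamond_pickaxe": {"diamond", "crafting_table"},
}


def crafting_order(tree):
    """Return one valid order in which the milestones can be reached."""
    return list(TopologicalSorter(tree).static_order())


print(crafting_order(TECH_TREE))  # starts with 'logs', ends with 'diamond_pickaxe'
```

The crafting table appears as a prerequisite again and again, which is exactly why leaving it behind causes trouble later in the chain.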

One difficulty lay in the intermediate goals: when the AI was rewarded for looking for iron after crafting the stone pickaxe, it dug straight down right after crafting and left the crafting table behind, even though it would need it again afterward. An additional intermediate goal therefore became necessary: breaking down and picking up the crafting table. In addition, the individual goals had to be weighted differently.
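A weighted milestone reward of this kind can be sketched as follows. The weights, the event names (including `pickup_crafting_table`), and the rule that each milestone pays out only once per episode are all hypothetical choices for illustration; the article does not give the values OpenAI used.

```python
from typing import Dict, Iterable, Set

# Hypothetical weights: later, harder milestones are worth more.
MILESTONE_REWARDS: Dict[str, float] = {
    "logs": 1.0,
    "planks": 2.0,
    "crafting_table": 4.0,
    "wooden_pickaxe": 8.0,
    "cobblestone": 16.0,
    "stone_pickaxe": 32.0,
    "pickup_crafting_table": 32.0,  # extra intermediate goal: take the table along
    "iron_ore": 64.0,
    "furnace": 64.0,
    "iron_ingot": 128.0,
    "iron_pickaxe": 256.0,
    "diamond": 1024.0,
    "diamond_pickaxe": 2048.0,
}


class MilestoneReward:
    """Pays each weighted milestone once per episode (an assumption here),
    so the agent cannot farm reward by repeating an easy step."""

    def __init__(self, weights: Dict[str, float]):
        self.weights = weights
        self.reached: Set[str] = set()

    def __call__(self, events: Iterable[str]) -> float:
        reward = 0.0
        for event in events:
            if event in self.weights and event not in self.reached:
                self.reached.add(event)
                reward += self.weights[event]
        return reward


reward_fn = MilestoneReward(MILESTONE_REWARDS)
print(reward_fn(["logs", "logs", "planks"]))  # 3.0: the second log pays nothing
print(reward_fn(["pickup_crafting_table"]))   # 32.0
```

Without the extra `pickup_crafting_table` term, nothing in such a reward tells the agent that carrying the table along is worth anything until much later.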

Once again, processing the necessary gameplay stages required a lot of computing power: 56,719 CPU cores and 80 GPUs were busy with machine learning for nearly six days. The algorithm went through 4,000 consecutive optimization iterations, reading and processing a total of 16.8 billion frames.
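These figures can be put into perspective with a few lines of arithmetic, assuming (as a simplification) that every processed frame corresponds to one game step at the 20 fps the agent runs at.

```python
TOTAL_FRAMES = 16_800_000_000  # frames processed during RL fine-tuning (article)
ITERATIONS = 4_000             # consecutive optimization iterations (article)
FPS = 20                       # agent frame rate (article)


def frames_per_iteration(total: int, iterations: int) -> float:
    return total / iterations


def gameplay_hours(frames: int, fps: int = FPS) -> float:
    """Convert a frame count into hours of play at `fps` frames per second."""
    return frames / fps / 3600


print(f"{frames_per_iteration(TOTAL_FRAMES, ITERATIONS):,.0f}")  # 4,200,000
print(f"{gameplay_hours(TOTAL_FRAMES):,.0f}")                    # 233,333
```

Under that assumption, the reinforcement learning phase alone corresponds to well over 200,000 hours of in-game play, more than three times the 70,000 hours of video used for pretraining.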

Further applications inside and outside Minecraft conceivable

The nine researchers see great potential in the newly tested video pretraining method. The concept can easily be transferred to other situations, they say, and applications outside of video games are also conceivable. The advantage here is that the AI was designed to work with mouse and keyboard input. The further development of the neural networks, however, will also depend on the MineRL Competition 2022, which starts on July 1: participants are allowed to use OpenAI's models to train AIs for other specific goals. Perhaps this will soon lead to the first Netherite pickaxe.

VPT paves the way toward allowing agents to learn to act by watching the vast numbers of videos on the Internet. Compared to generative video modeling or contrastive methods that would only yield representational priors, VPT offers the exciting possibility of directly learning large-scale behavioral priors in more domains than just language. While we only experiment in Minecraft, the game is very open-ended and the native human interface (mouse and keyboard) is very generic, so we believe our results bode well for other similar domains, e.g. computer usage.

We are also open sourcing our contractor data, Minecraft environment, model code, and model weights, which we hope will aid future research into VPT. In addition, we have partnered with the MineRL NeurIPS competition this year. Contestants can use and fine-tune our models to try to solve many difficult tasks in Minecraft.

OpenAI

Was this article interesting, helpful, or both? The editors are happy about any support for ComputerBase Pro and disabled ad blockers. More about ads on ComputerBase.
